Now that GPUs are widely used for neural network computation, the floodgates are creaking open on new and exciting methods of processing video and still images.

Many modern methods are based on a neural network: a program that passes data through layers of simple virtual “neurons”. These networks are “trained”: they are shown millions of images, and their internal weights are adjusted until particular processing paths are favoured as “correct”, or “learned”.

The trained models are then run on other images, applying the same computational paths to fill in missing colour or detect edges between colours.

I think that is about as succinct as I can make it. Someone, somewhere, has trained a machine learning model on images and video, and that model is then applied over and over to increase the resolution of each image.

In this post, I’ll be taking a video from YouTube, extracting all the frames, feeding them into an ML upscaler, then stitching them back together. YouTube videos will show the results before and after.

This blog is a work in progress so keep coming back to see updated examples and methods.

There will be several methods shown for each step.

For the sake of transparency, this blog post is monetised with a Topaz Labs affiliate link.

Full list of all software used

Note: You will only need all this software if you plan on completing all my examples.

- Chocolatey (choco)

- FFmpeg

- VLC

- Topaz Gigapixel (only $99 USD)

- Adobe Premiere

- Waifu2x

- Docker

- Neural Enhance

- Python

- GIMP

- GIMP-ML

- youtube-dl

This guide starts from scratch. Some of the software above does the same job as other entries and is included for completeness. To follow along using only open source tools, you need just FFmpeg and Python.

I’ll be using Chocolatey, a Windows package manager, to simplify installation of the non-commercial software.

For the initial test, I downloaded some animated content using youtube-dl: in this case, the original South Park episode.

Preparing Content for Machine Learning Upscaling

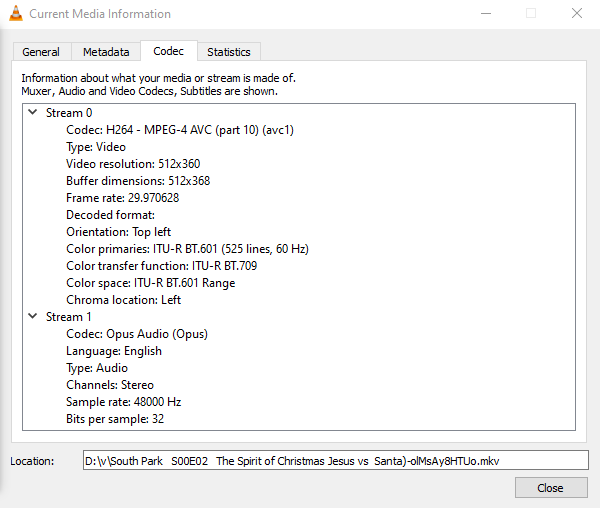

Within VLC, press Ctrl+I to open Current Media Information, then note the video frame rate on the Codec tab.

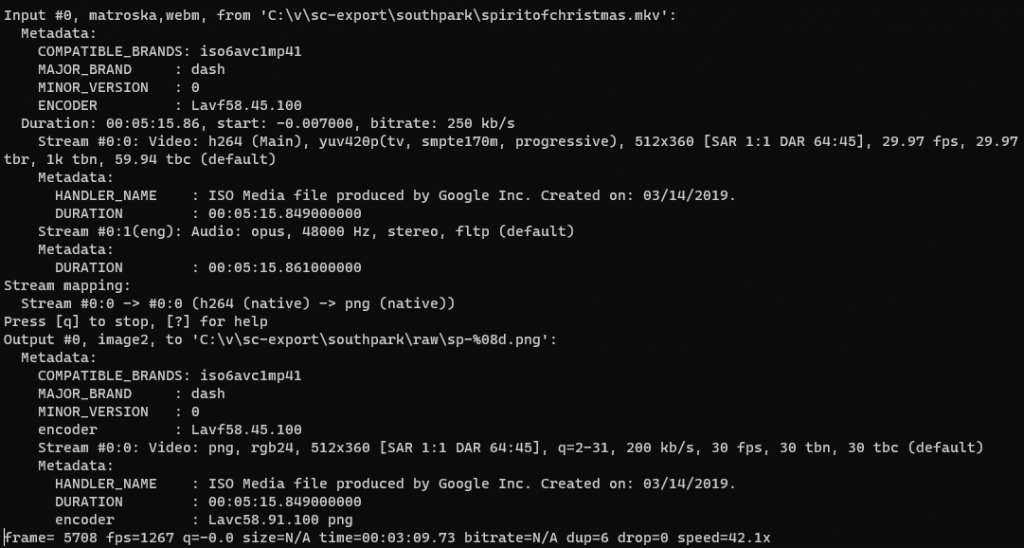
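
If you would rather read the frame rate from a script than from VLC, ffprobe (which ships with FFmpeg) can report it as a fraction such as 30000/1001. The following is a minimal Python sketch, assuming ffprobe is on your PATH. The function names `parse_frame_rate` and `probe_frame_rate` are my own for illustration and are not part of any tool.

```python
import subprocess
from fractions import Fraction


def parse_frame_rate(text):
    """Turn ffprobe's 'num/den' frame rate string into a float."""
    return float(Fraction(text.strip()))


def probe_frame_rate(video_path):
    """Ask ffprobe for the frame rate of the first video stream."""
    result = subprocess.run(
        [
            "ffprobe", "-v", "error",
            "-select_streams", "v:0",              # first video stream only
            "-show_entries", "stream=r_frame_rate",
            "-of", "default=noprint_wrappers=1:nokey=1",
            str(video_path),
        ],
        capture_output=True, text=True, check=True,
    )
    return parse_frame_rate(result.stdout)
```

Calling `probe_frame_rate(r"C:\v\sc-export\southpark\spiritofchristmas.mkv")` should return the same number VLC shows, which you can then round and pass to ffmpeg in the next step.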

Use FFmpeg to extract still images from the video at a frame rate of 30. These frames will be imported individually into Topaz Labs Gigapixel for processing.

ffmpeg -i C:\v\sc-export\southpark\spiritofchristmas.mkv -r 30 C:\v\sc-export\southpark\raw\sp-%08d.jpg
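
The same extraction can be scripted, which helps when you have several videos to process. This is a rough Python sketch of the command above, assuming ffmpeg is on your PATH. The helper names (`frame_name`, `build_extract_command`, `extract_frames`) are hypothetical, and the script creates the output folder first because ffmpeg will not create it for you.

```python
import subprocess
from pathlib import Path


def frame_name(pattern, number):
    """Expand an ffmpeg-style %08d pattern into a concrete file name."""
    return pattern % number


def build_extract_command(video_path, out_dir, fps=30, pattern="sp-%08d.jpg"):
    """Build the ffmpeg argument list that dumps frames as numbered images."""
    return [
        "ffmpeg", "-i", str(video_path),
        "-r", str(fps),                  # output frame rate, as in the post
        str(Path(out_dir) / pattern),    # ffmpeg fills in the frame number
    ]


def extract_frames(video_path, out_dir, fps=30, pattern="sp-%08d.jpg"):
    """Create the output folder if needed, then run the extraction."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(build_extract_command(video_path, out_dir, fps, pattern), check=True)
```

ffmpeg numbers image output from 1 by default, so the first file is `frame_name("sp-%08d.jpg", 1)`. If JPEG compression before upscaling worries you, changing the pattern to end in `.png` should keep the frames lossless, at the cost of much larger files.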

Extract the audio with ffmpeg so it can be re-attached later, using either Premiere or ffmpeg.

ffmpeg -i C:\v\sc-export\southpark\spiritofchristmas.mkv C:\v\sc-export\southpark\southpark-audio.aac

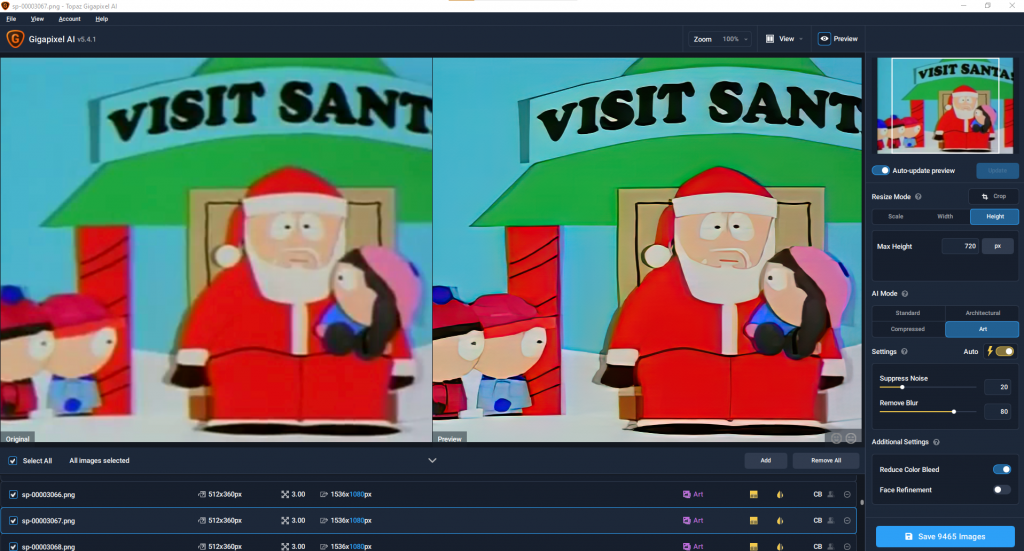

Feeding Images into the AI Upscaling Algorithm with Topaz Labs Gigapixel

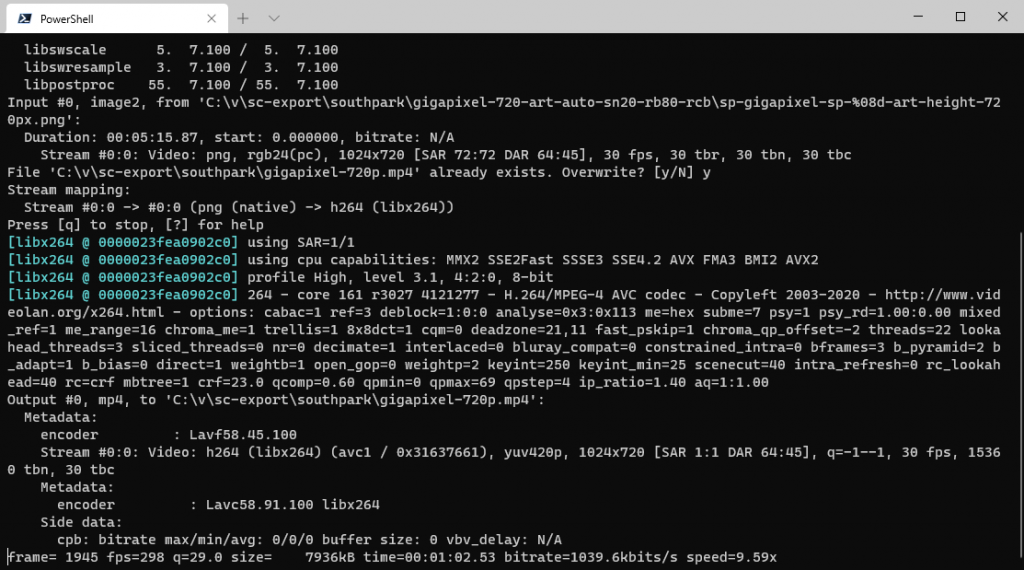

Recombining the images into a video

ffmpeg -f image2 -r 30 -i C:\v\sc-export\southpark\gigapixel-720-art-auto-sn20-rb80-rcb\sp-gigapixel-sp-%08d-art-height-720px.png -c:v libx264 -pix_fmt yuv420p C:\v\sc-export\southpark\gigapixel-720p.mp4

Or, if you need to do this in batches, or want to skip some frames, you can give ffmpeg a start frame number so it stitches the images together from a particular point without you having to rename all the files.

ffmpeg -f image2 -start_number 4696 -r 30 -i "C:\v\sc-export\1-gigapixel\4000-8000-2\sp-gp-rc-fr-0000%04d-gp-std-h720-standard-height-720px.jpeg" -c:v libx264 -pix_fmt yuv420p C:\v\sc-export\gigapixel-clown-720p.mp4
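
When working in batches it is worth checking the numbering before stitching, because ffmpeg's image sequence input expects the frame numbers after the start number to be consecutive. Below is a small Python sketch that reports the first frame number in a folder listing and any missing numbers. The name `find_gaps` and the default regular expression are my assumptions: the regex expects an eight-digit frame number between hyphens, as in the file names above, so adjust it if your upscaler names files differently.

```python
import re


def find_gaps(filenames, number_regex=r"-(\d{8})-"):
    """Return (first_number, missing_numbers) for a list of frame file names."""
    numbers = set()
    for name in filenames:
        match = re.search(number_regex, name)
        if match:
            numbers.add(int(match.group(1)))
    if not numbers:
        raise ValueError("no frame numbers found in the given file names")
    first, last = min(numbers), max(numbers)
    # Any number between the first and last frame with no file is a gap.
    missing = [n for n in range(first, last + 1) if n not in numbers]
    return first, missing
```

Run it as `find_gaps(os.listdir(folder))` after `import os`. The first value is what you would pass to `-start_number`, and an empty second value means the batch has no holes.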

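Finally, re-attach the extracted audio to the stitched video, copying both streams without re-encoding:

ffmpeg -i C:\v\sc-export\southpark\gigapixel-720p.mp4 -i C:\v\sc-export\southpark\southpark-audio.aac -c copy -map 0:v:0 -map 1:a:0 C:\v\sc-export\southpark\southpark-gigapixel-with-audio.mp4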
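
If a video was stitched from a batch that starts part-way through, as in the `-start_number 4696` example, the audio needs a matching offset before it is re-attached. Assuming a constant 30 fps and that the batch files keep the numbers ffmpeg gave them at extraction (counting from 1), frame N begins at (N - 1) / 30 seconds. The Python sketch below does that arithmetic. Both function names are hypothetical.

```python
def frame_start_seconds(frame_number, fps=30, first_frame=1):
    """Time in seconds at which a numbered frame begins in the source video."""
    return (frame_number - first_frame) / fps


def format_timestamp(seconds):
    """Format seconds as HH:MM:SS.mmm, a form ffmpeg's -ss option accepts."""
    millis = round(seconds * 1000)
    hours, rem = divmod(millis, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"
```

For frame 4696 this gives 156.5 seconds, or `00:02:36.500`. Placing `-ss 00:02:36.500` directly before the audio `-i` should start the audio at that point, and adding `-shortest` should stop the output when the shorter stream ends. I have not tested that combination against these exact files, so treat it as a starting point.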

Using Adobe Premiere to Upscale Camcorder Footage, Compared to Topaz Labs Gigapixel

As well as cleaning individual frames with AI-driven upscalers such as the Topaz Labs software, we can see how Premiere handles upscaling old camcorder content.

And, for comparison, the frame-by-frame upscaling using the Topaz Labs machine learning upscaler.

As we can see, Adobe Premiere does a decent job compared to Topaz Labs Gigapixel AI, but it costs a lot more.

Final Thoughts

The machine learning in Gigapixel gives some very good results on the block colours of animation, but it’s quite close to Adobe Premiere on video. With some test renders to tune Gigapixel’s settings, you could possibly get crisper content.

I’ve started with Topaz Labs Gigapixel AI (almost) out of the box, in its default settings, and a straight stretch in Adobe Premiere 2020.

Come back for examples of Waifu2x and some of the more esoteric ways to upscale video and animated content.

Links:

- https://www.gimp.org/tutorials/Basic_Batch/

- https://deepai.org/publication/gimp-ml-python-plugins-for-using-computer-vision-models-in-gimp

- https://chocolatey.org/install

- https://topazlabs.com/gigapixel-ai/

- http://waifu2x.udp.jp/

- https://github.com/alexjc/neural-enhance

- https://docs.docker.com/docker-for-windows/

- https://ffmpeg.org/

- https://www.videolan.org/vlc/index.en-GB.html

- https://github.com/topics/upscaling